Translating Between Brain and World: Decoding Biological Neural Nets with Artificial Neural Nets

Originally written by Nick Leiby, Scott Novotney and Jed Singer

We do a lot of work at Two Six Labs on artificial neural networks, from image recognition to anomaly detection. For one of our programs, DARPA’s Synergistic Discovery and Design (SD2), we use machine learning to accelerate laboratory research and generate scientific insights. We’ve designed protein sequences, predicted the stability of photovoltaic crystals, and, for one project, modeled real, biological neural networks.

Reading a mouse’s mind

A brain is made of interconnected neurons: cells that activate (or “fire”) in response to inputs and, in turn, activate other neurons. Simplified versions of these systems were the inspiration for the first artificial neural networks. Our collaborators in the Schnitzer Lab at Stanford produced a data set that monitors a mouse’s neural activity as it moves around an “arena”, in this case a box with stickers for landmarks. They did this by attaching a tiny microscope to the mouse’s head and recording the fluorescence of a dye that makes individual neurons glow green when they fire. This technology allows the Schnitzer Lab to track hundreds or thousands of neurons at once.

We focused on neurons in the CA1 region of the mouse’s hippocampus, a part of the brain involved in learning, memory, and navigation. Some of the neurons in this region are known as “place cells” because they fire in response to the mouse’s location. For example, a given cell might only fire when the mouse is in the top left corner of the enclosure. The mouse’s brain encodes a concept of position by interpreting the combined signal of these cells’ activity or inactivity.

We asked the question, “Can we use an artificial neural network to link the signals of these biological neurons to a map of the mouse’s physical location?” That is, if we reverse engineer the biological neural network, can we read a mouse’s mind to know where it is?

Artificial neural nets accurately predict location from biological neuron activity

We trained a neural network to predict the mouse’s position from recent neuron firing patterns. We used the first 80% of the observations in an experimental trial as training data and then, given only the neuronal activity, predicted the mouse’s location for the final 20% of the observations. We tried a number of model architectures, but a simple dense neural network with a regression output layer performed well, with an average prediction error of 4 cm. For a sense of scale, a mouse is about 8 cm long, and the arena is a rectangle of about 45×60 cm. This looping animation shows our predictions (blue dot) and the labeled position of the mouse (red dot).

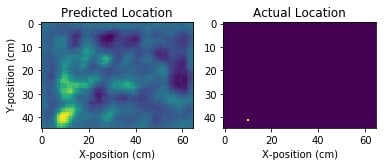
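To make the evaluation setup concrete, here is a minimal sketch of a chronological 80/20 split and the average-distance error metric. It uses synthetic stand-in data rather than the real recordings, and the names `chronological_split` and `mean_position_error`, the array shapes, and the mean-position baseline are all assumptions for illustration, not our actual pipeline.

```python
import numpy as np

def chronological_split(activity, positions, train_frac=0.8):
    """Split time-ordered data without shuffling: the first part trains, the rest tests."""
    n_train = int(len(activity) * train_frac)
    return (activity[:n_train], positions[:n_train],
            activity[n_train:], positions[n_train:])

def mean_position_error(predicted, actual):
    """Average Euclidean distance between predicted and actual (x, y), in input units."""
    return float(np.mean(np.linalg.norm(predicted - actual, axis=1)))

# Synthetic stand-in data (hypothetical shapes): 1000 time steps, 50 neurons.
rng = np.random.default_rng(0)
activity = rng.random((1000, 50))
positions = rng.random((1000, 2)) * np.array([45.0, 60.0])  # cm, assumed arena size

X_train, y_train, X_test, y_test = chronological_split(activity, positions)

# Trivial baseline for comparison: always predict the mean training position.
baseline = np.tile(y_train.mean(axis=0), (len(y_test), 1))
baseline_error = mean_position_error(baseline, y_test)
```

Splitting by time rather than at random keeps later observations out of the training set, so the test error reflects predictions on a part of the trial the model has never seen.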

The regression output performs well, but it doesn’t convey any information about how certain the prediction is, or about alternative predictions. We designed another deep neural net model, this time including convolutional layers. We divided the arena into a 1 cm grid and trained the model on a classification task: predicting which grid square in the arena the mouse occupies. Each square in the grid is assigned a probability that the mouse is there, giving us a heatmap of our prediction strength.
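As a rough illustration of the grid formulation, the sketch below maps a position in centimeters to a grid-cell class and turns a vector of raw model scores into a probability heatmap with a softmax. The 45 cm by 60 cm orientation, the constant names, and both helper functions are assumptions for the example; the model that would produce the scores is not shown.

```python
import numpy as np

# Assumed arena layout: 45 cm wide (x), 60 cm tall (y), 1 cm cells.
ARENA_W, ARENA_H, CELL = 45, 60, 1
N_COLS, N_ROWS = ARENA_W // CELL, ARENA_H // CELL

def position_to_cell(x, y):
    """Map an (x, y) position in cm to a flat class index, clamping at the far walls."""
    col = min(int(x // CELL), N_COLS - 1)
    row = min(int(y // CELL), N_ROWS - 1)
    return row * N_COLS + col

def logits_to_heatmap(logits):
    """Softmax over all cells, reshaped to (rows, cols) so it can be drawn as a heatmap."""
    z = logits - logits.max()          # subtract the max for numerical stability
    probs = np.exp(z) / np.exp(z).sum()
    return probs.reshape(N_ROWS, N_COLS)

# Random scores stand in for the output of a trained classifier.
rng = np.random.default_rng(0)
heatmap = logits_to_heatmap(rng.normal(size=N_ROWS * N_COLS))
```

With these assumed dimensions the classifier has 2,700 output classes, one per square centimeter of the arena.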

However, since the label of the mouse’s actual location is a single grid square (the center of the mouse), we needed to develop a novel loss function that teaches our model that a nearly correct prediction is better than a far-off prediction. Once we developed this, the model performed similarly to the point prediction model, with an average error of 5 cm, while providing much more information about alternative predictions and the model’s certainty. The blue cloud in the video represents the areas of the arena with the highest predicted probability that the mouse is there.
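This post does not spell out the loss function itself. One common way to get the same effect, shown here purely as an illustrative stand-in and not as the loss we used, is to replace the one-hot label with a Gaussian "soft" target centered on the true cell and score predictions with cross-entropy against it. The function names and the `sigma` value are assumptions.

```python
import numpy as np

def gaussian_soft_target(true_row, true_col, n_rows, n_cols, sigma=3.0):
    """Probability mass spread around the true cell, decaying with squared distance."""
    rows, cols = np.mgrid[0:n_rows, 0:n_cols]
    sq_dist = (rows - true_row) ** 2 + (cols - true_col) ** 2
    target = np.exp(-sq_dist / (2.0 * sigma ** 2))
    return target / target.sum()

def soft_cross_entropy(pred_probs, target, eps=1e-12):
    """Cross-entropy between a predicted heatmap and a soft target heatmap."""
    return float(-np.sum(target * np.log(pred_probs + eps)))

n_rows, n_cols = 60, 45
target = gaussian_soft_target(30, 20, n_rows, n_cols)

# Two hypothetical predictions: one a cell away from the truth, one far across the arena.
near_pred = gaussian_soft_target(31, 20, n_rows, n_cols)
far_pred = gaussian_soft_target(5, 40, n_rows, n_cols)

near_loss = soft_cross_entropy(near_pred, target)
far_loss = soft_cross_entropy(far_pred, target)
```

With a plain one-hot label, both predictions would be penalized only by how much probability they put on the single correct cell. With the soft target, the nearby prediction receives a noticeably lower loss than the distant one.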

Predicting future behavior – a mouse’s and ours

With this concept of uncertainty encoded into our predictions, we can also ask, “Can we read the mouse’s mind to predict where it will be in the future?” Instead of looking at the recent pattern of neuronal firing and asking where the mouse is now, we ask where it will be 1, 2, or 3 seconds in the future. Our predictions break down the further out we look, but they still perform well compared to baselines.
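Predicting the future only requires changing the training labels: each window of neural activity is paired with the position recorded some fixed time later. The sketch below shows that shift under assumed names; `make_future_pairs` and the 20 Hz frame rate in the example are hypothetical, since the recording rate is not stated here.

```python
import numpy as np

def make_future_pairs(activity, positions, horizon_s, frame_rate_hz):
    """Pair activity at time t with the position at time t + horizon_s."""
    shift = int(round(horizon_s * frame_rate_hz))
    if shift == 0:
        return activity, positions
    # Drop the last `shift` inputs (no future label) and the first `shift` labels.
    return activity[:-shift], positions[shift:]

# Toy data where each value equals its frame index, so the shift is easy to see.
activity = np.arange(100).reshape(100, 1)
positions = np.arange(100)

# Assumed 20 Hz frame rate: a 2-second horizon is a 40-frame shift.
X_future, y_future = make_future_pairs(activity, positions, horizon_s=2, frame_rate_hz=20)
```

The same model architecture can then be trained on the shifted pairs, one model per horizon.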

The data we analyzed represented very simple mouse behavior: a mouse moving around in a box. Even so, we could segment the data into two behavior categories: ‘active’ and moving, or stationary and ‘dwelling’ in place. We were able to predict the current behavior of the mouse with 75% balanced accuracy and still had 66% accuracy 2 seconds into the future. This suggests that the hippocampal neurons we’re modeling don’t just encode information about the present, but also some degree of planning for the future.
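Balanced accuracy is the average of the per-class recalls, which matters when one behavior is much more common than the other. A minimal sketch with made-up labels (1 for ‘active’, 0 for ‘dwelling’; the encoding and function name are assumptions) shows the difference from plain accuracy:

```python
import numpy as np

def balanced_accuracy(y_true, y_pred):
    """Mean of the per-class recalls, so each class counts equally."""
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    recalls = [np.mean(y_pred[y_true == c] == c) for c in np.unique(y_true)]
    return float(np.mean(recalls))

# Made-up example: 8 'active' frames, 2 'dwelling' frames,
# and a lazy classifier that always predicts 'active'.
y_true = [1] * 8 + [0] * 2
y_pred = [1] * 10

plain = float(np.mean(np.asarray(y_true) == np.asarray(y_pred)))
balanced = balanced_accuracy(y_true, y_pred)
```

Here the lazy classifier scores 80% on plain accuracy but only 50% on balanced accuracy, the same as chance for two classes.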

Our collaborators in the Schnitzer Lab are working to produce more complex behavioral data sets to which we can apply these same methods. It would be interesting to see whether we can map a mouse as it navigates through a maze, predicting left and right turns and quantifying the mouse’s uncertainty as it learns a maze. Or perhaps we will be able to apply these same methods to identify which thematic image stimuli a mouse is being exposed to. Just as we use mice as research models to learn more about ourselves as humans, hopefully our artificial neural networks will help us better understand biological neural networks.

This project is a fun example of how we’re able to apply machine learning techniques, both well-researched and more experimental, to help make progress in cutting-edge laboratory research.